Yuankai Luo (罗元凯)

Assistant Professor at Nanjing University

[Google Scholar] [GitHub] [Email: yuankailuo@nju.edu.cn]

I am currently an Assistant Professor at the School of Artificial Intelligence, Nanjing University (NJU). I received my Ph.D. from the School of Computer Science and Engineering at Beihang University, where I was supervised by Prof. Lei Shi, and was jointly trained at The Hong Kong Polytechnic University under the supervision of Prof. Xiao-Ming Wu. Before that, I conducted research under the supervision of Veronika Thost.

Driven by the philosophy of “Simplicity,” my research focuses on improving the efficiency and scalability of AI systems. My work has evolved from optimizing structural representation frameworks to developing efficient generative frameworks for Embodied AI and Scientific Discovery.

1. ModernGNN: Efficient Structural Representation

-

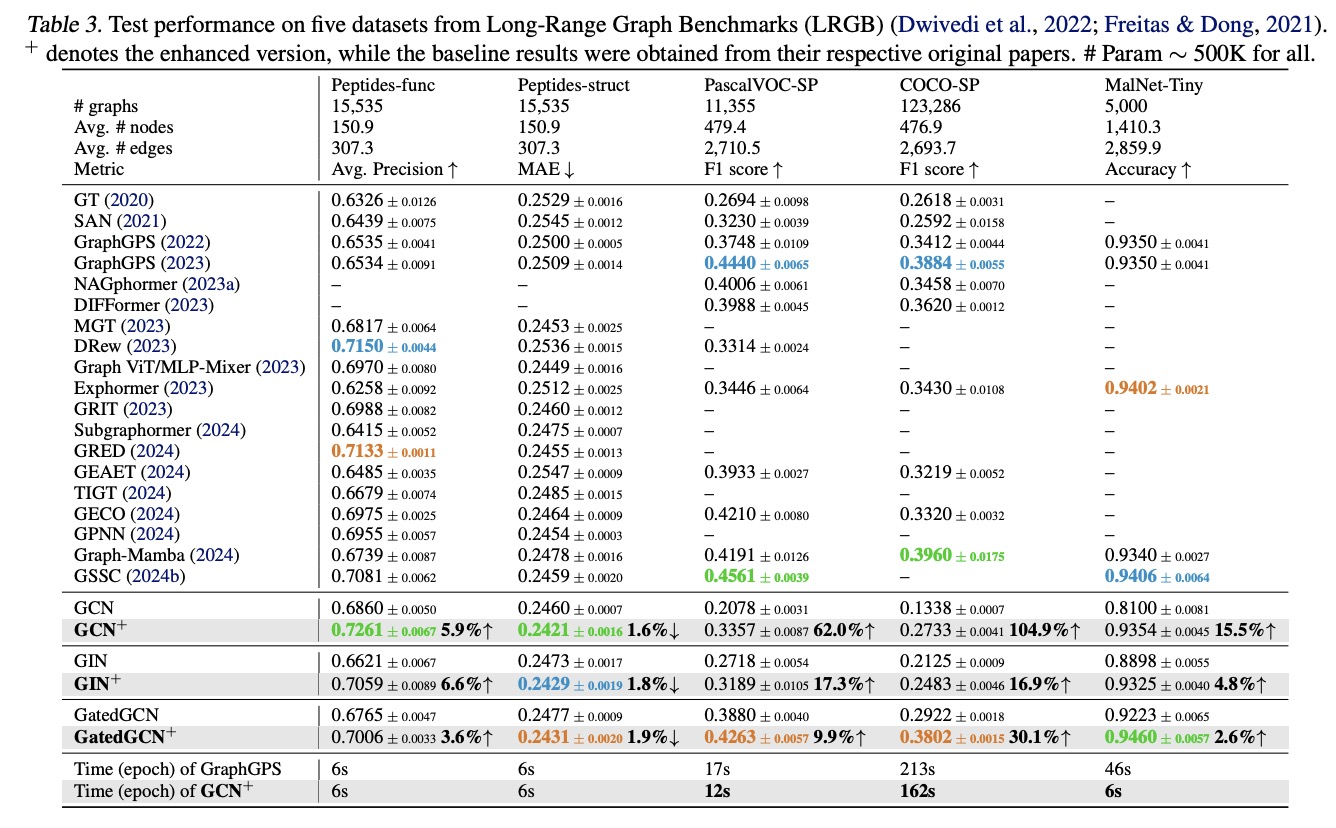

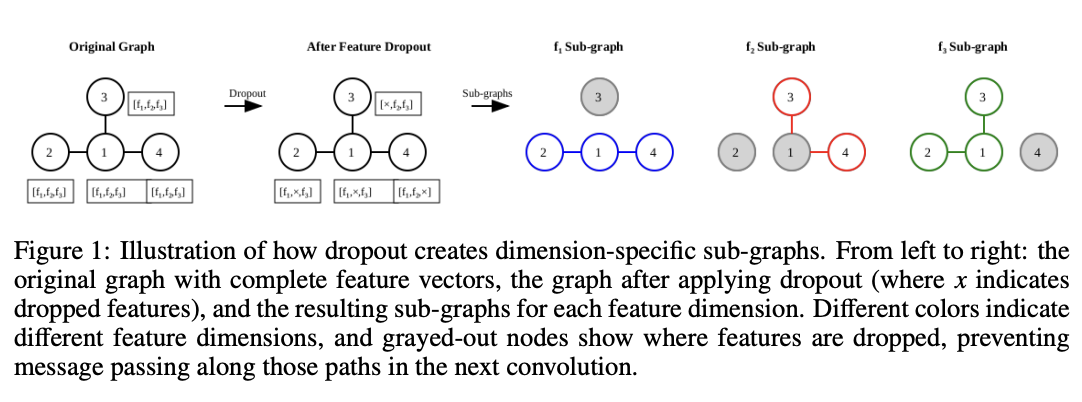

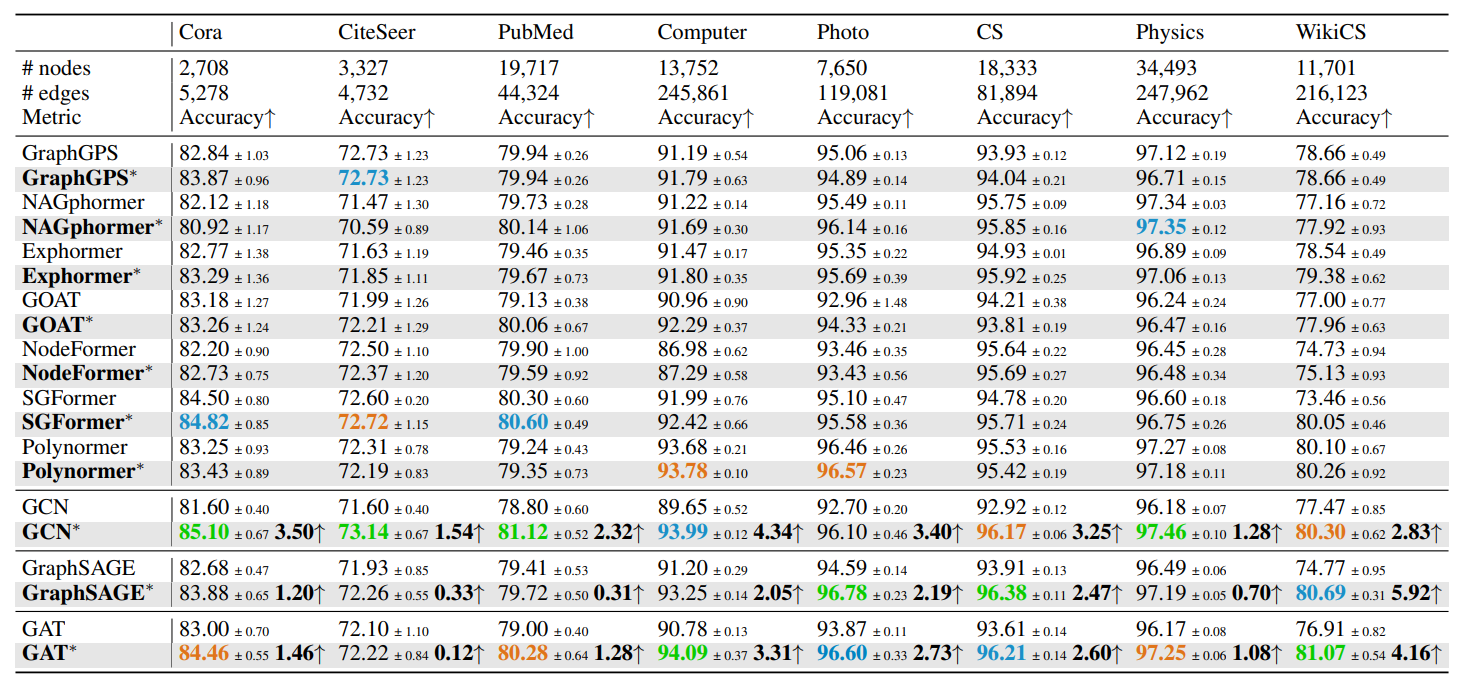

Systematic Architecture Refinement: developed ModernGNN (GNN+), a framework that integrates message passing and well-known regularization techniques like dropout. GNN+ demonstrates that the true potential of classic GNNs has been previously underestimated in both node-level and graph-level tasks, challenging the belief that complex mechanisms are necessary for superior performance in graph models [NeurIPS 2024, ICML 2025, ICLR 2025].

-

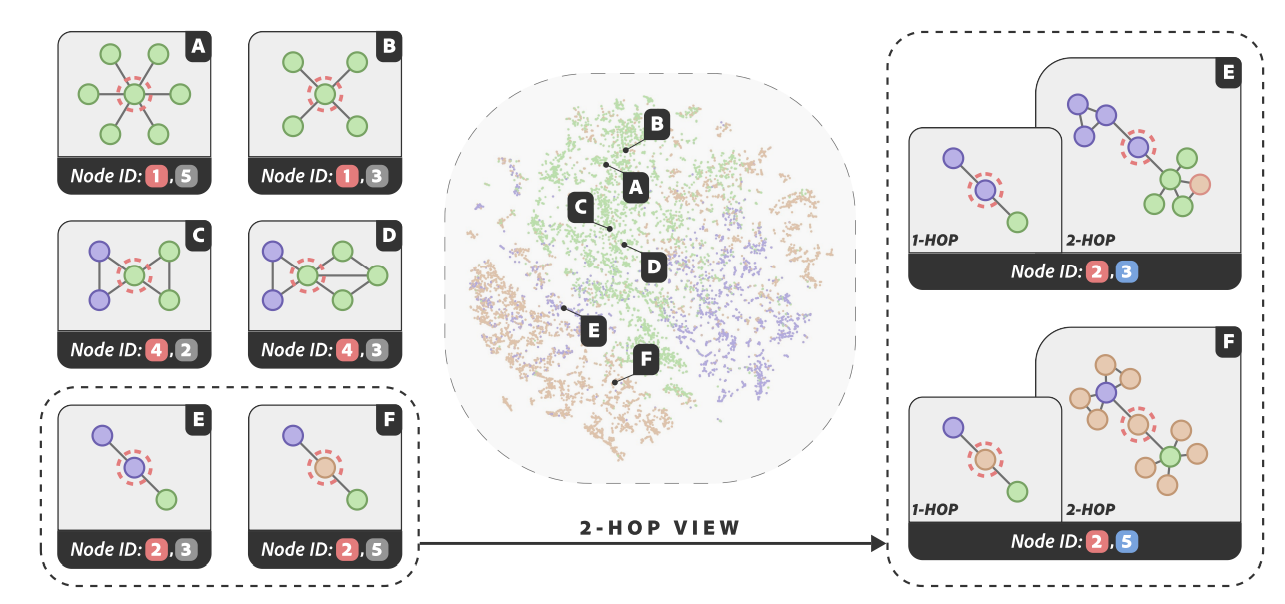

Neural Compression: introduced vector quantization to compress continuous node embeddings into highly compact (typically 6-15 dimensions), discrete (int4 type), and interpretable node representations—termed Node IDs [ICLR 2025].

-

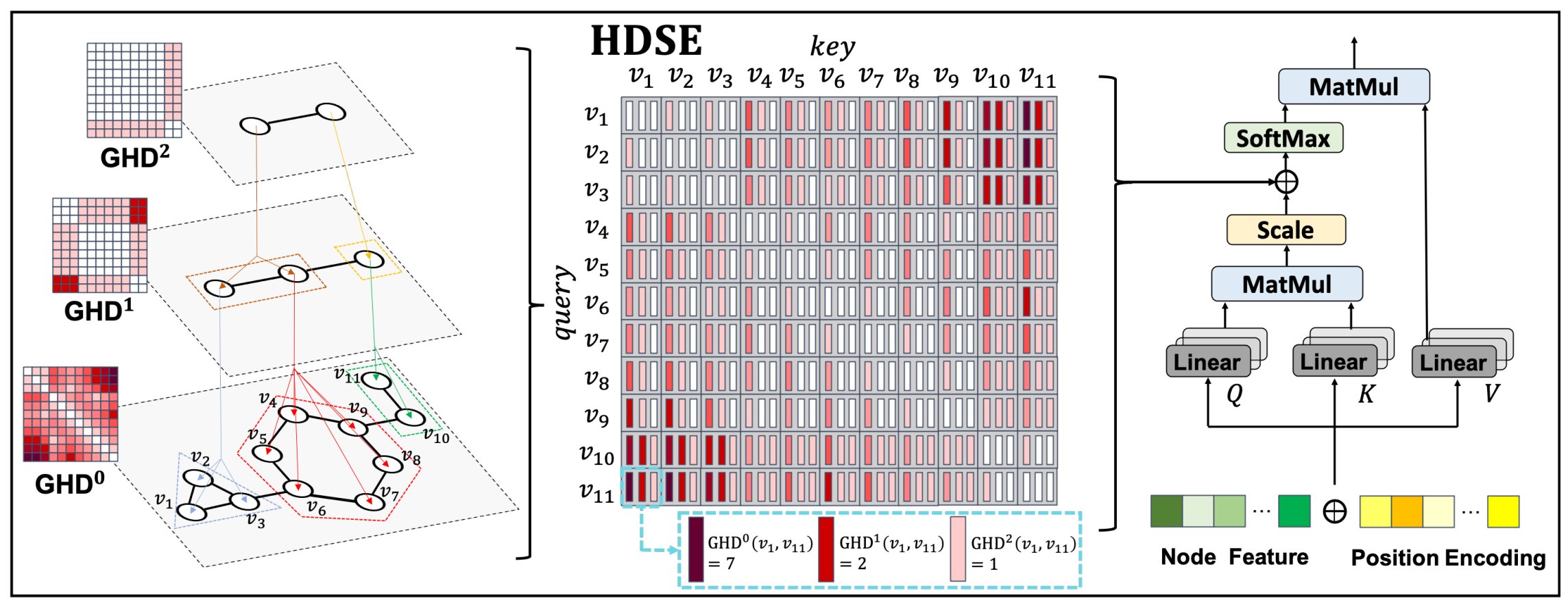

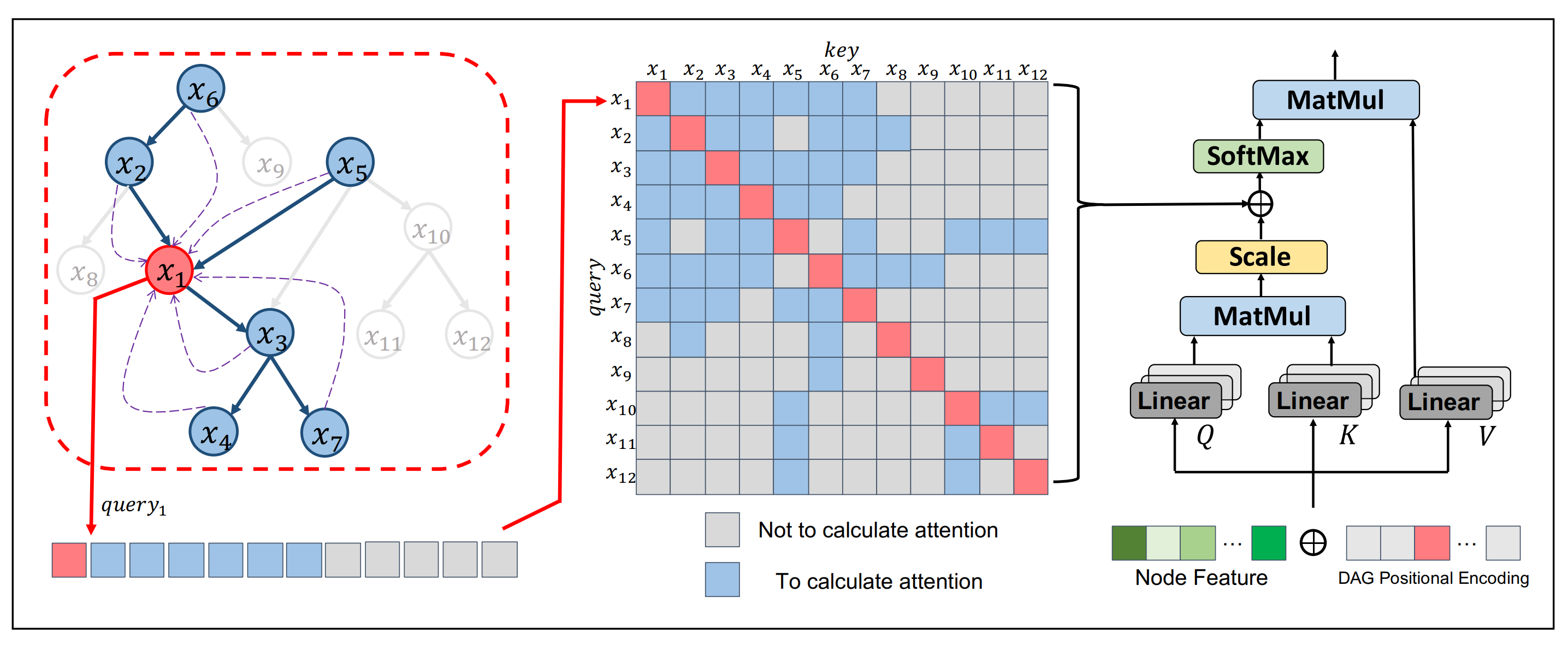

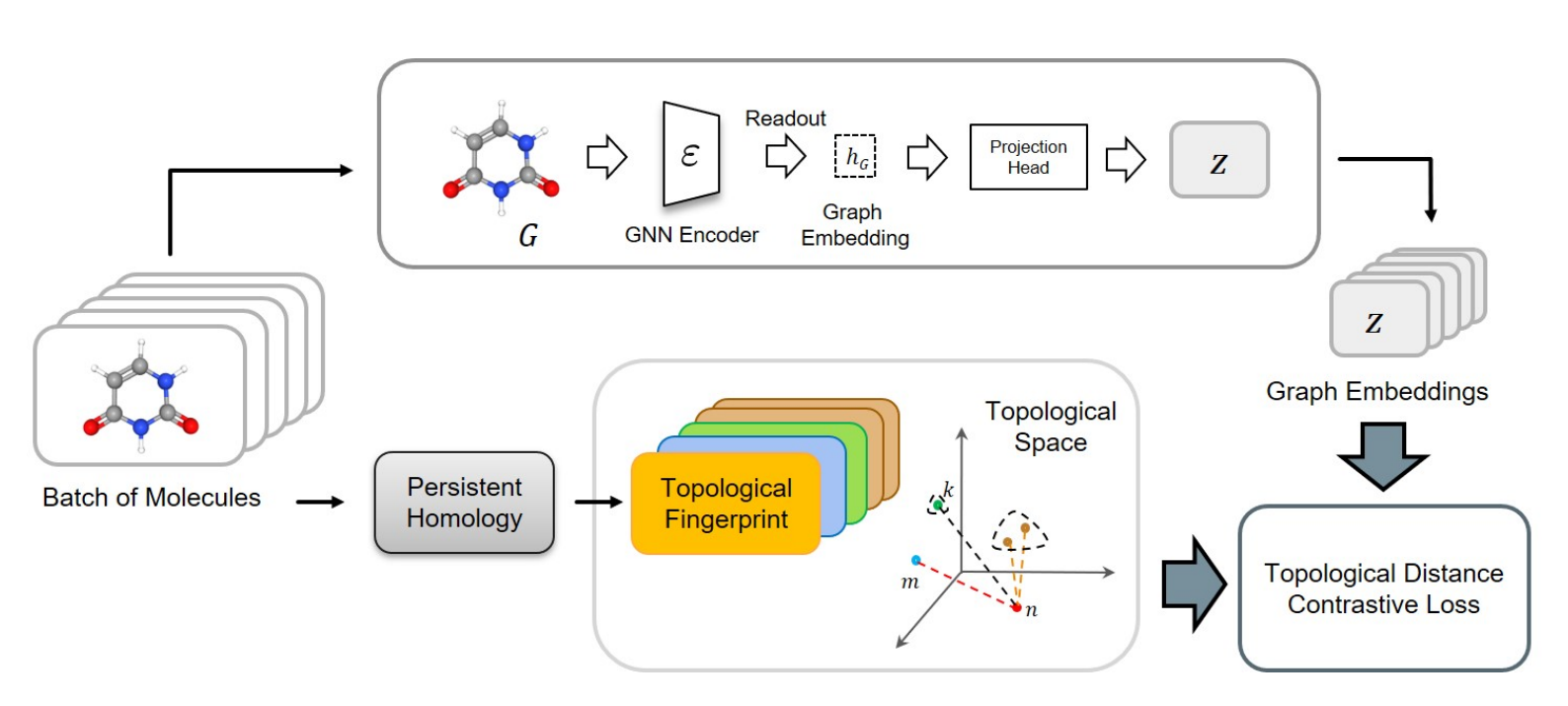

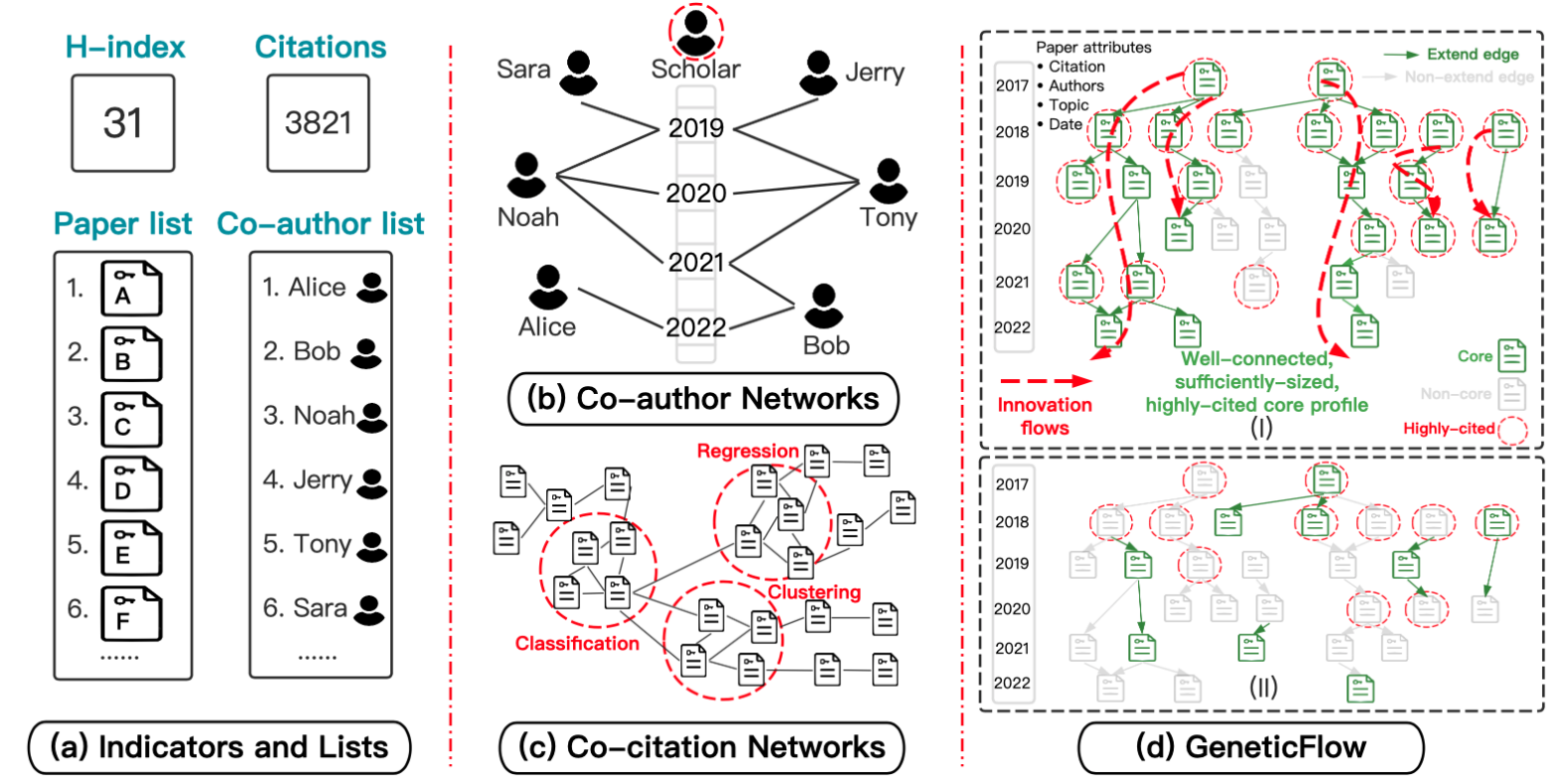

Specialized Architectures & Applications: designed Graph Transformers for complex topologies, including DAGs [NeurIPS 2023] and multi-level structures [NeurIPS 2024]. Applied these methods to practical tasks, such as molecular property prediction using persistent homology [NeurIPS 2023] and scholarly impact profiling [KDD 2023].
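
As a rough illustration of how vector quantization can turn a continuous embedding into a short sequence of small integers, the sketch below implements residual vector quantization in plain Python. It is a toy under stated assumptions, not the Node IDs implementation: the codebooks are hand-picked rather than learned, and capping each codebook at 16 entries so that every index fits in 4 bits is an illustrative reading of "int4". The names `residual_quantize` and `reconstruct` are hypothetical.

```python
from typing import List, Sequence

Vector = List[float]
Codebook = Sequence[Sequence[float]]

def sq_dist(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def nearest_index(codebook: Codebook, v: Sequence[float]) -> int:
    """Index of the codebook vector closest to v."""
    return min(range(len(codebook)), key=lambda k: sq_dist(codebook[k], v))

def residual_quantize(v: Sequence[float], codebooks: Sequence[Codebook]) -> List[int]:
    """Encode v as one index per codebook; each level quantizes what earlier levels missed."""
    residual = list(v)
    codes = []
    for codebook in codebooks:
        # Illustrative assumption: at most 16 entries, so each index fits in 4 bits.
        assert len(codebook) <= 16, "codebook too large for a 4-bit index"
        k = nearest_index(codebook, residual)
        codes.append(k)
        residual = [r - c for r, c in zip(residual, codebook[k])]
    return codes

def reconstruct(codes: Sequence[int], codebooks: Sequence[Codebook]) -> Vector:
    """Approximate the original vector by summing the selected codewords."""
    out = [0.0] * len(codebooks[0][0])
    for k, codebook in zip(codes, codebooks):
        out = [o + c for o, c in zip(out, codebook[k])]
    return out

# Toy 2-D codebooks (hypothetical): a coarse level followed by a finer level.
codebooks = [
    [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]],
    [[0.0, 0.0], [0.5, 0.0], [0.0, 0.5], [0.5, 0.5]],
]
embedding = [1.4, 1.1]
codes = residual_quantize(embedding, codebooks)
print(codes)                          # [3, 1]
print(reconstruct(codes, codebooks))  # [1.5, 1.0]
```

The two-level code [3, 1] reconstructs [1.5, 1.0], which is closer to the input than the coarse codeword [1.0, 1.0] alone; adding levels is what lets a short code sequence carry finer detail.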

2. Sim-Series: Efficient Generative Frameworks

- Robotic Action Generation (SimVLA): established an efficient Vision-Language-Action (VLA) baseline for robotic manipulation by decoupling perception from control. SimVLA shows that a streamlined design, built on a standardized training recipe, standard flow matching, and a standard self-attention head, performs robustly across diverse manipulation tasks.

- Graph Generation (SimGFM): proposed a simplified Discrete Flow Matching framework for graph generation. By using an endpoint-focused scheduler and eliminating task-specific heuristics, it reduces the number of sampling steps needed for graph structure generation from hundreds to fewer than ten.
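
SimVLA is described above as relying on standard flow matching. As a rough illustration only (not SimVLA's code), the sketch below shows the common linear-path formulation: interpolate between a Gaussian noise sample x0 and a target action x1, regress a velocity field onto x1 - x0, and generate by Euler integration from t = 0 to t = 1. Observation and language conditioning are omitted, actions are plain Python lists, and `oracle_velocity` is a hypothetical stand-in for a trained network: it is the exact velocity field when the data consist of a single target x1, which makes the sampler easy to check.

```python
import random
from typing import Callable, List

Vector = List[float]
VelocityFn = Callable[[Vector, float], Vector]

def interpolate(x0: Vector, x1: Vector, t: float) -> Vector:
    """Point on the straight path from noise x0 (t = 0) to data x1 (t = 1)."""
    return [(1.0 - t) * a + t * b for a, b in zip(x0, x1)]

def target_velocity(x0: Vector, x1: Vector) -> Vector:
    """Time derivative of the straight path, used as the regression target."""
    return [b - a for a, b in zip(x0, x1)]

def flow_matching_loss(velocity_fn: VelocityFn, x1: Vector, rng: random.Random) -> float:
    """One-sample flow matching loss: mean squared error between predicted and target velocity."""
    x0 = [rng.gauss(0.0, 1.0) for _ in x1]  # Gaussian noise sample
    t = rng.random()                         # time drawn uniformly from [0, 1)
    pred = velocity_fn(interpolate(x0, x1, t), t)
    target = target_velocity(x0, x1)
    return sum((p - q) ** 2 for p, q in zip(pred, target)) / len(x1)

def euler_sample(velocity_fn: VelocityFn, x0: Vector, num_steps: int) -> Vector:
    """Integrate dx/dt = v(x, t) from t = 0 to t = 1 with fixed-size Euler steps."""
    x = list(x0)
    dt = 1.0 / num_steps
    for i in range(num_steps):
        v = velocity_fn(x, i * dt)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x

def oracle_velocity(x1: Vector) -> VelocityFn:
    """Hypothetical stand-in for a trained network: exact velocity when the data is the single point x1."""
    def v(x: Vector, t: float) -> Vector:
        return [(b - a) / (1.0 - t) for a, b in zip(x, x1)]
    return v

target_action = [0.2, -0.5, 1.0]  # hypothetical 3-D action vector
velocity = oracle_velocity(target_action)
rng = random.Random(0)
print(flow_matching_loss(velocity, target_action, rng))
noise = [rng.gauss(0.0, 1.0) for _ in target_action]
print(euler_sample(velocity, noise, num_steps=10))  # close to target_action
```

Because the oracle is exact, ten Euler steps land on the target; with a learned, imperfect velocity field, the number of steps trades sample quality against inference cost.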

Recent Publications

Academic Service

Conference Reviewer:

- WSDM 2023/2024, ICML 2024/2025/2026, NeurIPS 2024 (Top Reviewer Award)/2025, ACL ARR 2024/2025, ICLR 2025/2026, AAAI 2025